In the second week of January, a visit to my parents’ home brought the delight of their garden into sharp relief. I love their garden. When conversation is easy it adds a bloom; when difficult, it serves as a useful distraction. We swiftly turned our attention to comparing the winter’s floral arrangements. Despite the challenge of soot-laden air, the right soil mix and sunlight had coaxed the chrysanthemums into a splendid bloom.

As is often the case in familial interactions, we found ourselves embroiled in a light-hearted dispute over the trivial: whether this year saw a dominance of pink over white in the garden, possibly an example of Mendel’s genetic laws in action. To resolve our quandary, my mother produced her phone, deftly navigated to her photo gallery and unveiled a surprising truth: we hadn’t planted chrysanthemums the previous year at all. This revelation prompted us to ponder how the flowers had appeared, how we could so clearly misremember, and the indispensable role our digital records play in anchoring our memories to reality, for “people remember things wrong, sometimes they hallucinate…”.

In the era of AI, marked by a pervasive atmosphere of mistrust, it’s become second nature for us to question our memories until they’re validated by digital records. Yet, lurking beneath this practice is a nagging doubt — could our devices be developing minds of their own? This concern gains weight as the term ‘hallucinate’ finds its way into discussions about generative AI with increasing regularity. These machine dreams captivate us with their richness, detail, and allure, crafting fictions more enticing than reality itself. Among its notorious offspring is the deepfake, capable not only of fabricating facts but also of duping human intuition.

Is it real, or is it fake? Who is to say? A “deepfake” real enough to satisfy the sexual release of repressed Indian men? But doesn’t it take very little for arousal? Okay, wrong example. Okay, since the elections are approaching, maybe political misinformation to target rivals? Well, even here, many, if not most, will separate fact from fiction based on the ideology they worship. Again, wrong example. I guess what I am trying to convey is a feeling of suspicion. Rather than merely making us believe in something that did not occur, I believe the deeper harm may be the total erasure of shared trust.

The instinctive reaction to generative AI is far from the delight felt on the release of a new iPhone. Amidst calls for regulation, debates oscillate between the necessity for oversight and the valorization of ‘innovation’ in a neoliberal context. Caught in this ping-pong are the nuanced and oft-overlooked aspects of public policy. While some advocate for the potential benefits of deepfake technology for everyday people, such as educational uses, these discussions tend to get lost in the noise. To make this tangible: on many TV panel discussions held when the Rashmika Mandanna deepfake sparked a maelstrom, I cited the educational benefits of deepfake technologies for “rendering and recreating the Dandi March in 4K”. But where is this clip? It does not exist, yet. Also, a part of me is filled with trepidation about its rendition in a climate that enforces the cultural interests of Hindu nationalism.

The Dandi March illustration was my attempt to showcase a wider social benefit. But in linking deepfakes to an educational or social use, did I unwittingly instrumentalise their very human, even democratic and cultural, potential? Stacking benefits against harms. Weighing the scales. After all, this is what lawyers and policy planners are trained to do. But isn’t this a myopic frame for the richer potential and ingenuity to shape technology? For people to use it in their own way without harming another?

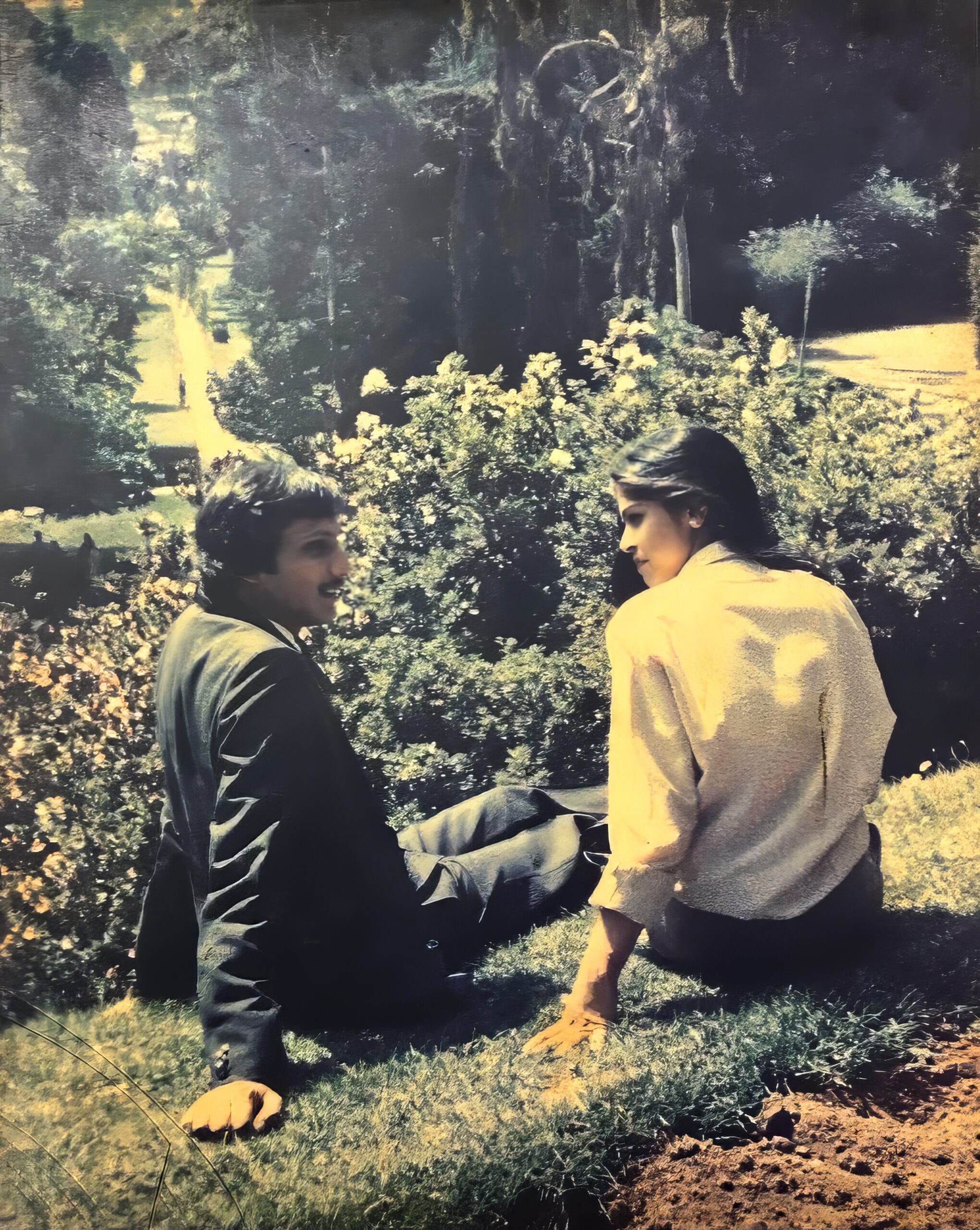

These questions would never have emerged had I not encountered CAMP’s exhibit, “Deepfacts+++”, shown late last year at the historic Chemould Prescott Road. This is a 15-minute audio-visual presentation of a conversation between the famed gallerists Khorshed Gandhy and Kekoo Gandhy, who passed away a few years ago. Here, wife and husband come to life once again to fact-check commentary on their lives with the characteristic nok-jhok of an old Parsi couple. As they reminisce over their lives they fact-check account after account, some from Jerry Pinto’s book, “Citizen Gallery”, with a sense of childlike play. This seeming inversion of deepfakes into tools for fact-checking may seem like an obvious artistic provocation. However, to me it held profound meaning. That meaning was revealed only when I asked the artists, Shaina and Ashok, how they brought Khorshed and Kekoo back to life.

In two calls arranged by Dhamini Ratnam (who introduced me to the exhibit and has written about it here), they explained that the exhibit, made as a gift to the Gandhy family and the Chemould gallery, departed from their usual artistic practice of public exhibits. However, they continued their use of art practices to pose questions on the social assimilation of technologies. Here, they often use the very anxieties that arise when a new technology impacts society to pose questions. The effect is far richer than a simple cost-benefit analysis.

To implement this vision for “Deepfacts+++”, they scripted on the basis of research and personal interviews with family members. The actors who voiced them were known to the family and even included a relative. All through the process of creation they talked over a family WhatsApp group with emojis and inside jokes. It was a meaningful exchange that went beyond legal notions of fact-checking and consent in the production of deepfake AI that recreated the personas of the famed gallerists. This invocation of souls is successful in invoking life. When Kekoo asks Khorshed why she is not wearing her glasses, pat comes the reply, “I got lasik. You need a haircut”.

Here, the human act of production of “Deepfacts+++” overpowers the technical sophistication. The technical sophistication, while impressive, is incidental. Here Studio.Camp’s use of the open source DeepFace app hosted on GitHub, the video archives used as training data, and the voice-overs by family members serve a larger goal. To me, the exhibit provides a real, tangible instance of deepfake technologies as a form of freedom of expression. It provides some comfort at a time when every wave of technology seems a necessity, yet also a widget in a larger dystopia. Their artistic processes helped calm some of my fears, if only for a moment. More importantly, it was a comfort to know that if we get this right, generative AI can even help us to trust one another. Where deepfakes can be deepfacts.

The next time I visit my parents, they may share this article on their society and alumni WhatsApp groups. I considered adding a childlike provocation by writing the following ending. How would they react if I asked for their thoughts on visual interviews as training data for a deepfake? For tempting is the thought of wielding the smartphone as a wand for eternal comfort. Is it real, or is it fake? Who is to say? Just a tap to hear familiar voices and enter petty disputes over chrysanthemums and petunias. No matter how many winters may come to pass.
